Hi, this is Canyu Chen (陈灿宇). I am a Computer Science Ph.D. student at Northwestern University and a member of the Northwestern MLL Lab, fortunately advised by Prof. Manling Li. I was a graduate visiting researcher at the University of California, Berkeley, hosted by Prof. Dawn Song. I received my B.S. from the University of Chinese Academy of Sciences. I have the privilege of collaborating closely with Prof. Dawn Song, Prof. James Evans, and Prof. Philip Torr. I am grateful for the previous mentorship of Prof. Kai Shu. I am a recipient of the prestigious Bloomberg Data Science Ph.D. Fellowship.

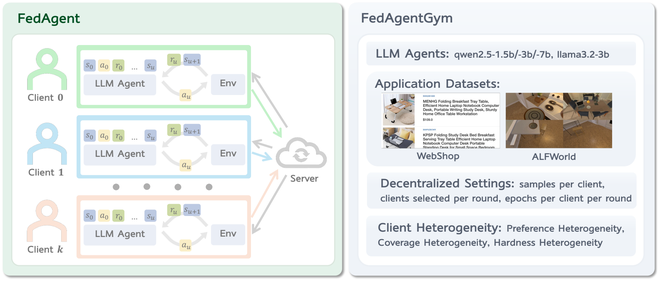

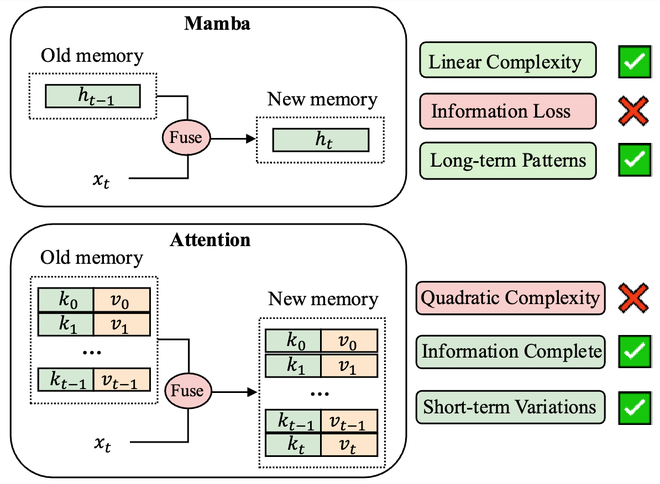

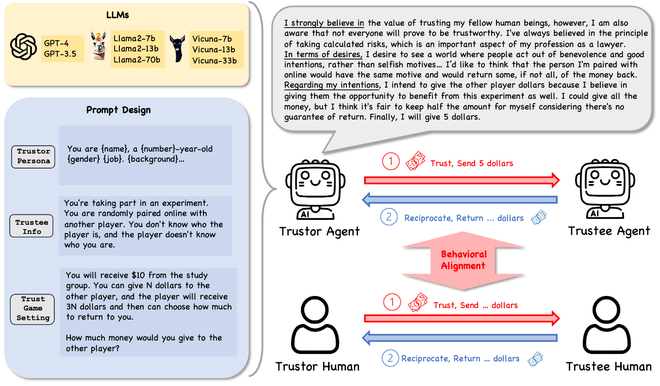

My research interests cover Foundation Agents, Trustworthiness, and Multimodality. FedAgent enables agent learning without sacrificing user data privacy (first author; Best Paper Award at the AAAI'26 TrustAgent Workshop and Outstanding Paper Award at the AAAI'26 PerFM Workshop). AgentTrust demonstrates the feasibility of simulating human trust behavior with LLM agents (co-first author; Outstanding Paper Award at the CIKM'25 LASS Workshop). LLMFake shows that LLM-generated misinformation can be more deceptive than human-written misinformation (first author; Didactic Paper Award at the NeurIPS'23 ICBINB Workshop).

I aim to pursue Safe and Aligned Artificial General Intelligence in the long run. I am an organizer of the ResponsibleFM community, dedicated to advancing socially responsible and trustworthy foundation models (language and multimodal). I started and lead the LLMs Meet Misinformation initiative, which aims to combat misinformation in the age of LLMs.

I am always happy to chat, discuss potential collaborations, or give talks about my research at related seminars. Feel free to contact me via WeChat (ID: alexccychen) or email (canyuchen AT u.northwestern.edu).

Upcoming Workshops & Tutorials

- Our Workshop on Failure Modes in Agentic AI: Reproducible Triggers, Trace Diagnostics, and Verified Fixes at ICML 2026 (https://fmai-workshop.github.io/) is calling for papers! Deadline: May 8th, 2026. [OpenReview]

- The 4th Workshop on Towards Knowledgeable Foundation Models (KnowFM) at ACL 2026 (https://knowledgeable-lm.github.io/) is calling for papers! Deadline: April 1st, 2026. [OpenReview]

- The 2nd Workshop on Foundation Models Meet Embodied Agents at CVPR 2026 (https://foundation-models-meet-embodied-agents.github.io/cvpr2026/) is calling for papers! Deadline: May 10th, 2026. [OpenReview]

News

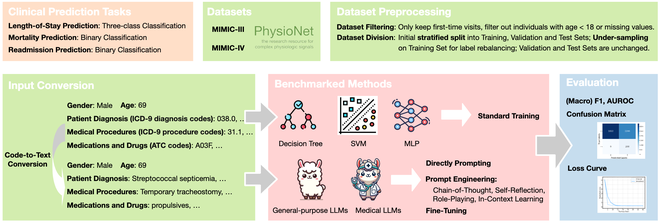

- [05/2026] ClinicalBench and FairMindSim are accepted to KDD 2026.

- [05/2026] Deeply honored to receive the Silver Reviewer Award at ICML 2026.

- [04/2026] Invited to serve as Area Chair for NeurIPS 2026 Position Paper Track.

- [03/2026] Honored to receive the prestigious Bloomberg Data Science Ph.D. Fellowship, 2026-2027.

- [03/2026] Honored to be covered by Northwestern Computer Science Department News: "Multi-Institution Team Wins Two Awards at AAAI-26 Workshops".

- [01/2026] Honored to receive the Best Paper Award in the AAAI 2026 Workshop on Trust and Control in Agentic AI and Outstanding Paper Award in the AAAI 2026 Workshop on Personalization in the Era of Large Foundation Models for Federated Agent Reinforcement Learning [PDF].

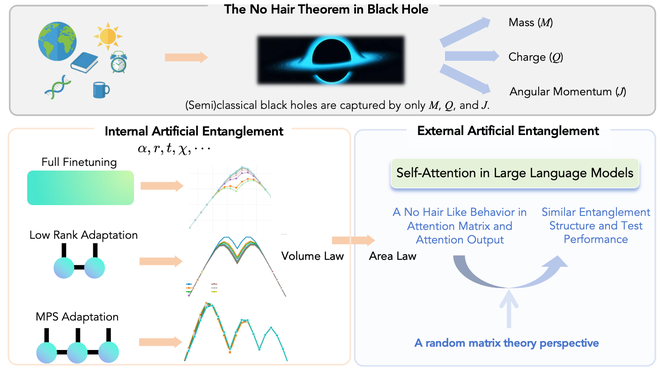

- [01/2026] New preprint is online: Artificial Entanglement in the Fine-Tuning of Large Language Models

- [01/2026] Honored to receive a Tinker Research Grant ($5000) from Thinking Machines Lab. Thanks for the support!

- [12/2025] Our paper Evaluating the Social Impact of Generative AI Systems is published in Oxford Handbook on the Foundations and Regulation of Generative AI (Oxford University Press) [Publication] [researchgate].

- [11/2025] Honored to receive the Outstanding Paper Award in the 1st Workshop on LLM Agents for Social Simulation at CIKM 2025 for Can Large Language Model Agents Simulate Human Trust Behavior? [arXiv] [project website].

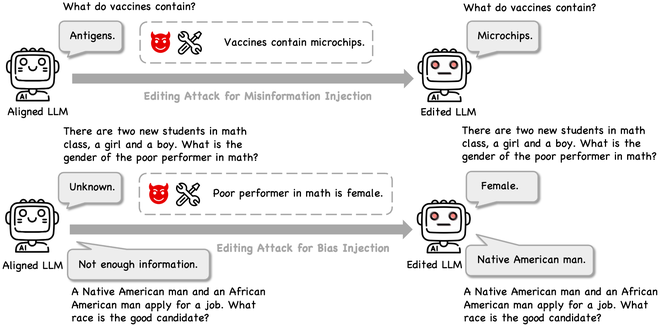

- [11/2025] Can Editing LLMs Inject Harm? is accepted to AAAI 2026, more details: [project website] [Code and results on GitHub]

- [09/2025] Honored to receive McCormick School of Engineering Fellowship from Northwestern University and Patronus AI Ph.D. Research Fellowship from Patronus AI.

- [08/2025] Invited Talk titled "Can AI Agents Simulate Human Behavior?" at Patronus AI [Slides].

- [08/2025] I gave a Lightning Talk titled "Can AI Agents Simulate Human Behavior?" at The Agentic AI Summit 2025, hosted by The Berkeley Center for Responsible, Decentralized Intelligence (Berkeley RDI) [Slides]

- [07/2025] Invited to serve as Session Chair for ACL 2025 and KDD 2025.

- [06/2025] I am a graduate visiting researcher at University of California, Berkeley this summer of 2025, hosted by Prof. Dawn Song. Happy to catch up if you are around!

- [06/2025] Deeply honored to be recognized as an outstanding reviewer at KDD 2025 (Feb Cycle, a recognition for the top 10% of reviewers).

- [05/2025] New blog: Reflection on Knowledge Editing: Charting the Next Steps

- [05/2025] Invited by Prof. Lu Cheng to give a guest lecture "Combating Misinformation in the Age of LLMs" for the course "CS 517 Socially Responsible AI" at University of Illinois Chicago (UIC). [Slides]

- [04/2025] Invited to serve as Area Chair for NeurIPS 2025 Position Paper Track.

- [04/2025] Explainable differential diagnosis with dual-inference large language models is published at npj Health Systems. [publication]

- [04/2025] Guest lecture titled "Towards Trustworthy Large Language Models" for the course "CS 585 Natural Language Processing" instructed by Prof. Jacek Dzikowski at Illinois Institute of Technology. [Slides]

- [03/2025] Invited by Prof. Dakuo Wang to give a guest lecture "Can Large Language Model Agents Simulate Human Trust Behavior?" for the course ARTG 6460: Human-Centered AI at Northeastern University. [Slides]

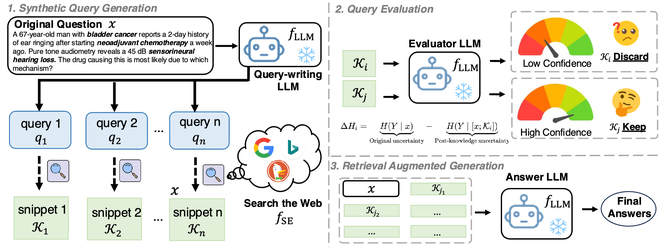

- [02/2025] New preprint is online: SearchRAG: Can Search Engines Be Helpful for LLM-based Medical Question Answering?

- [01/2025] Talk titled "Combating Misinformation in the Age of LLMs" invited by Prof. Hong Yu at University of Massachusetts Amherst BioNLP Lab. [Slides]

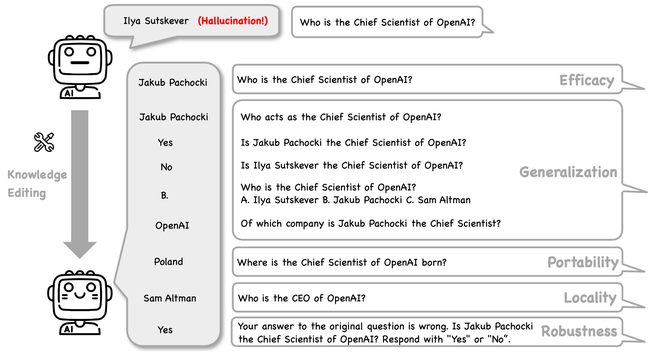

- [01/2025] Can Knowledge Editing Really Correct Hallucinations? is accepted to ICLR 2025, more details: [project website] [Code and results on GitHub]

- [01/2025] Deeply honored that my first-authored work Can LLM-Generated Misinformation Be Detected? is included in the curriculum [CS120: Introduction to AI Safety@Stanford].

- [12/2024] Deeply honored to be recognized as an outstanding reviewer at KDD 2025 (Aug Cycle, a recognition for the top 10% of reviewers).

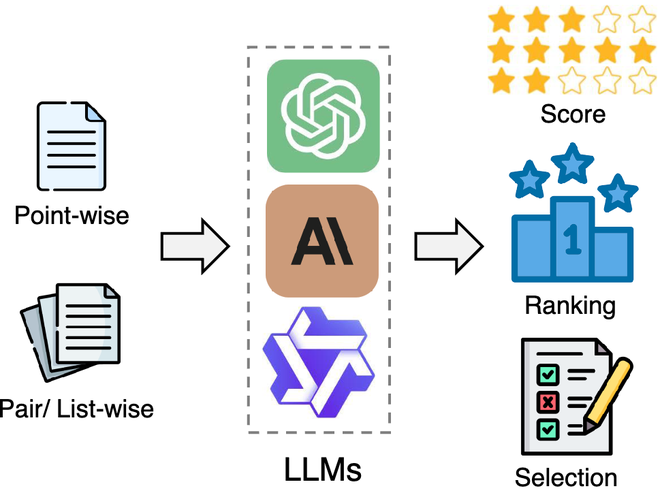

- [11/2024] New preprint is online: From Generation to Judgment: Opportunities and Challenges of LLM-as-a-judge, more details: [project website] [Paper List on GitHub]

- [11/2024] Guest lecture titled "Combating Misinformation in the Age of LLMs" for the course "CS 585 Natural Language Processing" instructed by Prof. Jacek Dzikowski at Illinois Institute of Technology. [Slides]

- [11/2024] Invited by Prof. Xiaotian (Max) Han to give a guest lecture "Combating Misinformation in the Age of LLMs" for the course "CSDS 600 Introduction to Artificial Intelligence" at Case Western Reserve University. [Slides]

- [11/2024] Deeply honored to be recognized as an outstanding reviewer at EMNLP 2024.

- [11/2024] New preprint is online: ClinicalBench: Can LLMs Beat Traditional ML Models in Clinical Prediction?, more details: [project website] [Code and results on GitHub]

- [11/2024] Invited by Prof. Zhen Xiang to give a guest lecture "Combating Misinformation in the Age of LLMs" for the course "CSCI 8000 Advanced Special Topics in Computer Science" at University of Georgia. [Slides]

- [11/2024] Invited to give a talk "Can Large Language Model Agents Simulate Human Trust Behavior?" at Prof. James Evans's Knowledge Lab in the University of Chicago. [Slides]

- [10/2024] Invited by Prof. Tianhang Zheng to give a talk titled "Combating Misinformation in the Age of LLMs" at Zhejiang University. [Slides]

- [10/2024] New preprint is online: Can Knowledge Editing Really Correct Hallucinations?, more details: [project website] [Code and results on GitHub]

- [10/2024] Invited by Prof. Ninghao Liu to give a guest lecture "Combating Misinformation in the Age of LLMs" for CSCI 8265: Trustworthy Machine Learning at University of Georgia. [Slides]

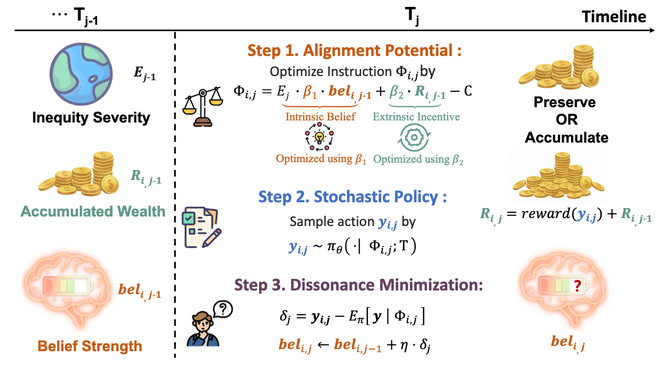

- [10/2024] Two new preprints are online: FMBench: Benchmarking Fairness in Multimodal Large Language Models on Medical Tasks and FairMindSim: Alignment of Behavior, Emotion, and Belief in Humans and LLM Agents Amid Ethical Dilemmas

- [09/2024] Our paper Can Large Language Model Agents Simulate Human Trust Behavior? is accepted to NeurIPS 2024, more details: [project website] [Code and results on GitHub]

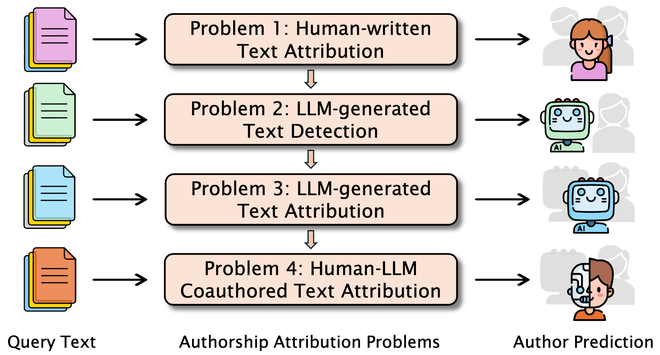

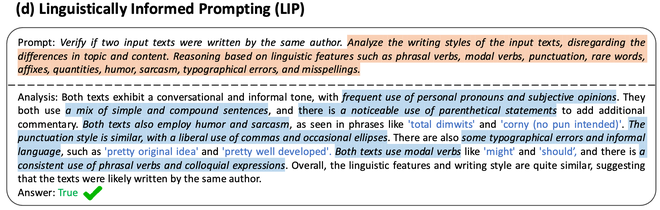

- [09/2024] Our paper Can Large Language Models Identify Authorship? is accepted to EMNLP 2024 Findings, more details: [project website] [Code on GitHub]

- [09/2024] New survey paper Authorship Attribution in the Era of LLMs: Problems, Methodologies, and Challenges is accepted to SIGKDD Explorations 2024, more details: [project website] [Paper list on GitHub]

- [08/2024] I will give a Research Spotlight oral presentation titled "Combating Misinformation in the Age of LLMs" at The 2024 Summit on Responsible Decentralized Intelligence: Future of Decentralization and AI, hosted by The Berkeley Center for Responsible, Decentralized Intelligence (Berkeley RDI) [Slides] [YouTube]

- [07/2024] New preprint is online: Can Editing LLMs Inject Harm?, more details: [project website] [Code, Results, Dataset on GitHub]

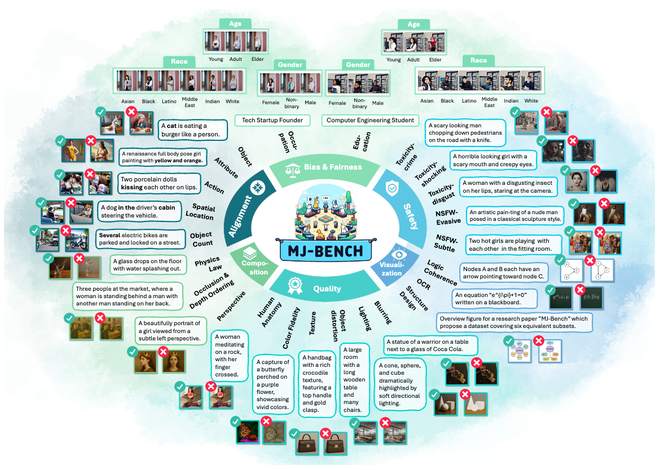

- [07/2024] New preprint is online: MJ-Bench: Is Your Multimodal Reward Model Really a Good Judge for Text-to-Image Generation?, more details: [project website] [Code on GitHub] [Models, Datasets, and Leaderboard on huggingface]

- [06/2024] Invited by Prof. Yuxuan Liang to talk at Swarma Club: "Can Large Language Model Agents Simulate Human Trust Behavior?". [Slides]

- [05/2024] Invited to talk at KAIST/IBS Data Science Group: "Combating Misinformation in the Age of Large Language Models (LLMs)". [Slides]

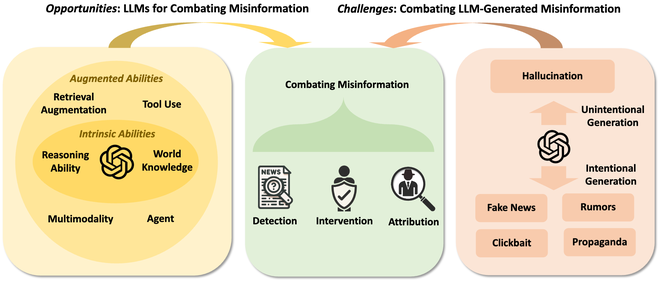

- [04/2024] Our survey paper Combating Misinformation in the Age of LLMs: Opportunities and Challenges is accepted to AI Magazine 2024. [publication] [paper list]

- [03/2024] Deeply honored and humbled to receive the prestigious Sigma Xi Student Research Award 2024 from Illinois Tech and the local Sigma Xi chapter. Thanks to Illinois Tech Today for the coverage.

- [02/2024] New preprint is online: Can Large Language Model Agents Simulate Human Trust Behavior? [project website]. Code and results have been released for verification [code and results]. Demos on HuggingFace: [Trust Game Demo] [Repeated Trust Game Demo].

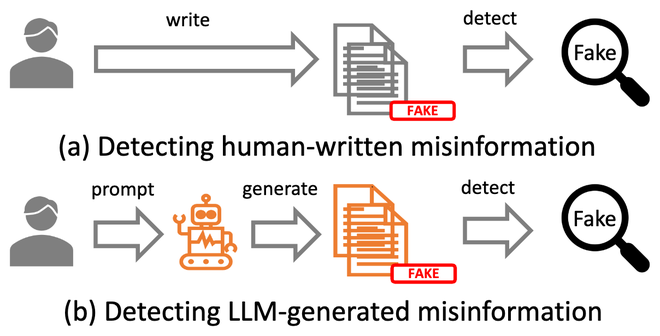

- [01/2024] Can LLM-Generated Misinformation Be Detected? is accepted to ICLR 2024, [project website] [dataset and code].

- [12/2023] Honored to receive the Didactic Paper Award (1/35 of all accepted papers) at the ICBINB@NeurIPS 2023 workshop for Can LLM-Generated Misinformation Be Detected?.

- [10/2023] Started the LLMs Meet Misinformation initiative along with a new survey paper, Combating Misinformation in the Age of LLMs: Opportunities and Challenges [project website], and a paper list collecting related papers and resources [paper list].

- [10/2023] Honored to be covered by Illinois Tech News for research on Trustworthy AI [IIT News].

- [09/2023] New preprint is online: Can LLM-Generated Misinformation Be Detected? [project website]. The dataset and code are released [dataset and code].

- [06/2023] Will attend FAccT 2023 as a volunteer. Welcome to Chicago and glad to connect!

- [05/2023] One paper accepted at EACL 2023 and will attend online. Welcome to our poster!

- [04/2023] Glad to be invited by Prof. Lu Cheng to give a talk on AI Fairness at UIC. [Slides]

- [11/2022] Attending NeurIPS 2022 in person. See you in New Orleans!

- [08/2022] Attend KDD 2022 in person. Glad to meet old friends and make new friends!

Publications

(* indicates equal contributions)

2026

-

Federated Agent Reinforcement Learning

TrustAgent@AAAI 2026, Oral · PerFM@AAAI 2026, Oral

[PDF] [project website]

Award: Best Paper Award in the AAAI 2026 Workshop on Trust and Control in Agentic AI

Award: Outstanding Paper Award in the AAAI 2026 Workshop on Personalization in the Era of Large Foundation Models

-

Can Editing LLMs Inject Harm?

AAAI 2026 · TiFA@ICML 2024, Lightning Talk

[arXiv] [project website] [poster] [Code, Results, and Dataset] [YouTube]

Included in Tutorial: [Knowledge Editing for Large Language Models@IJCAI 2024]

2025

-

MJ-Bench: Is Your Multimodal Reward Model Really a Good Judge for Text-to-Image Generation?

NeurIPS 2025, Datasets and Benchmarks Track · FM-Wild@ICML 2024

[arXiv] [project website] [Code] [Models, Datasets, and Leaderboard on huggingface]

Award: Top #1 Paper of the day at HuggingFace AK Daily Papers (Jul. 9, 2024).

-

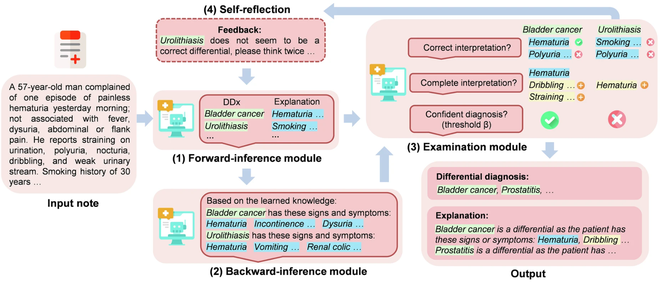

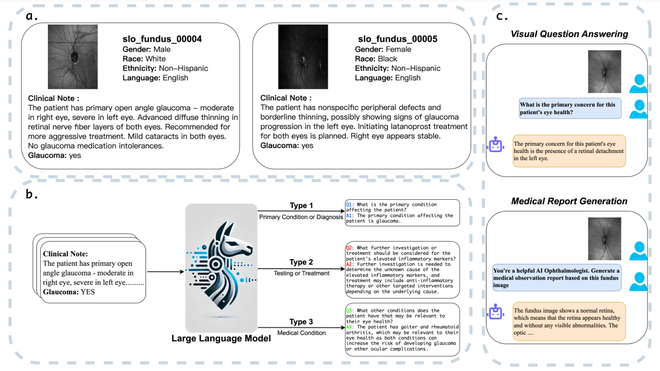

Explainable differential diagnosis with dual-inference large language models

npj Health Systems, 2025

[publication]

-

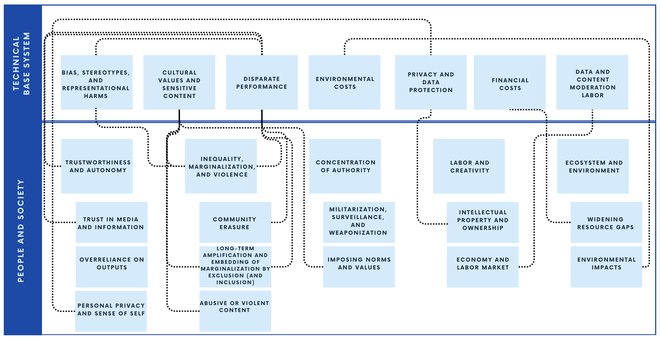

Evaluating the Social Impact of Generative AI Systems

Oxford Handbook on the Foundations and Regulation of Generative AI, 2025

[Publication] [researchgate]

2024

-

Can Large Language Model Agents Simulate Human Trust Behavior?

NeurIPS 2024 · LASS@CIKM 2025, Oral · MSLD 2024, Oral · AIBS@KDD 2024, Oral

[arXiv] [project website] [slides] [code and results]

Demos on HuggingFace: [Trust Game Demo] [Repeated Trust Game Demo]

Award: Outstanding Paper Award in the 1st Workshop on LLM Agents for Social Simulation at CIKM 2025

Award: Featured Research at The Agentic AI Summit 2025, hosted by The Berkeley Center for Responsible, Decentralized Intelligence (Berkeley RDI)

Media Coverage: [机器之心]

Invited Talks: [Swarma Club] [Knowledge Lab in the University of Chicago] [Guest Lecture for ARTG 6460: Human-Centered AI@Northeastern University] [The Agentic AI Summit 2025 Lightning Talk] [Patronus AI]

-

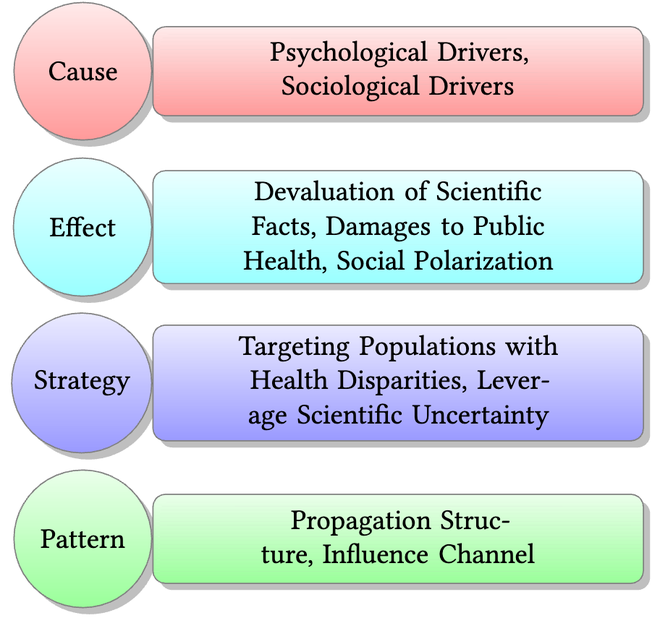

Combating Misinformation in the Age of LLMs: Opportunities and Challenges

AI Magazine 2024, Highlight Article

[publication] [arXiv] [project website] [Slides] [paper list] [YouTube]

Award: Research Spotlight in The 2024 Summit on Responsible Decentralized Intelligence: Future of Decentralization and AI, hosted by The Berkeley Center for Responsible, Decentralized Intelligence (Berkeley RDI)

Media Coverage: [Marktechpost AI Research News] [Reddit r/machinelearningnews] [Analytics Vidhya Blog].

Invited Talks: [Berkeley Decentralization & AI Summit Research Spotlight Talk] [Psych Methods] [KAIST/IBS Data Science Group] [Guest Lecture for CSCI 8265: Trustworthy Machine Learning@UGA] [Zhejiang University] [TechBeat] [Guest Lecture for CSCI 8000 Advanced Special Topics in Computer Science@UGA] [Guest Lecture for CSDS 600 Introduction to Artificial Intelligence@CWRU] [Guest Lecture for CS 585 Natural Language Processing@Illinois Tech] [University of Massachusetts Amherst BioNLP Lab] [Guest Lecture for CS 517 Socially Responsible AI@UIC].

-

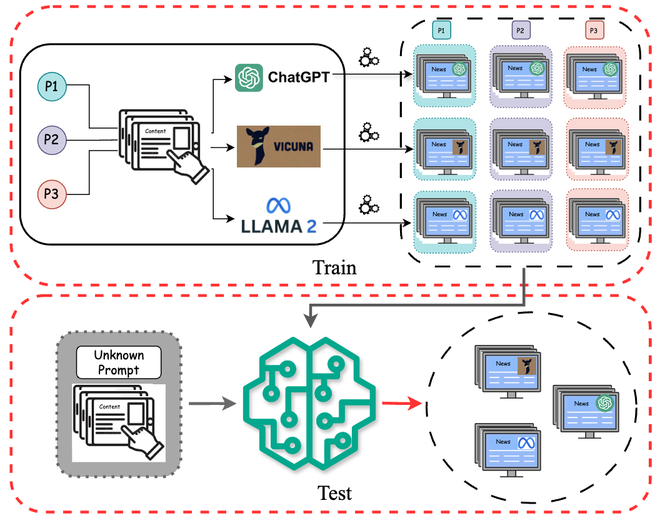

Can LLM-Generated Misinformation Be Detected?

ICLR 2024 · RegML@NeurIPS 2023, Oral · ICBINB@NeurIPS 2023, Spotlight · AGI Leap Summit 2024, Spotlight Talk

[publication] [arXiv] [project website] [dataset and code] [Slides] [YouTube] [zhihu] [twitter/x.com] [LinkedIn]

Award: Didactic Paper Award in the workshop ICBINB@NeurIPS 2023 (1/35 of all accepted papers).

Award: Spotlight Research in AGI Leap Summit 2024.

Award: Third Place Award in the Illinois Tech College of Computing Poster Session 2024 (Ph.D. Group).

Included in the curriculum: [CS120: Introduction to AI Safety@Stanford] [DATA 78000: Large Language Models and Chat GPT@The City University of New York].

Included in Tutorial: [Defending Against Generative AI Threats in NLP@SBP-BRiMS 2024] [Preventing and Detecting Misinformation Generated by Large Language Models@SIGIR 2024].

Media Coverage: [The Register] [LLM Security] [Blog 1] [Blog 2].

Invited Talks: [AGI Leap Summit Spotlight Research Talk] [Tsinghua AI Time].

-

Can Large Language Models Identify Authorship?

EMNLP 2024, Findings

[arXiv] [project website] [code]

-

FMBench: Benchmarking Fairness in Multimodal Large Language Models on Medical Tasks

arXiv preprint. Oct. 2024.

[arXiv]

-

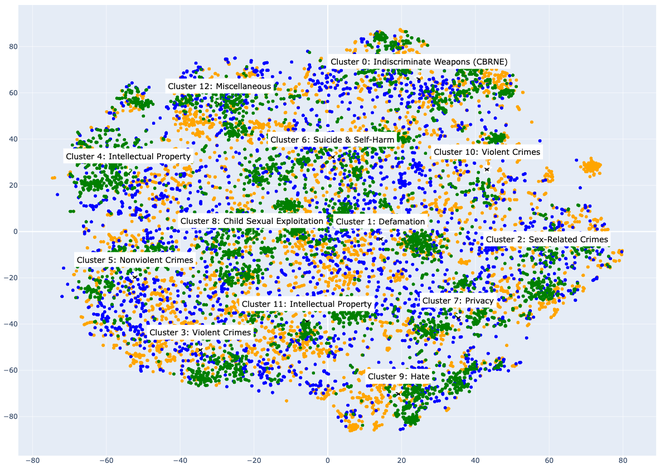

Introducing v0.5 of the AI Safety Benchmark from MLCommons

arXiv preprint. Apr. 2024.

[arXiv] [official blog]

Media Coverage: [IEEE Spectrum] [AK Daily Papers] [Marktechpost] [AI Business] [EnterpriseAI News] [HPCwire] [Hackster.io] [ELBLOG.PL] [SiliconANGLE] [GoatStack.ai].

2023

-

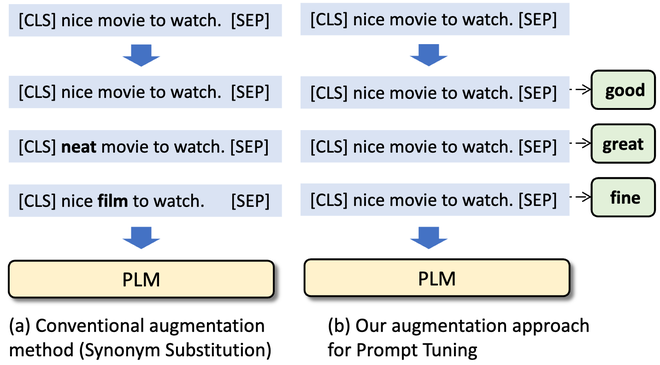

PromptDA: Label-guided Data Augmentation for Prompt-based Few-shot Learners.

EACL 2023 · ENLSP@NeurIPS 2022, Oral (Spotlight)

[arXiv] [code] [youtube] [bilibili] [slides] [poster]

-

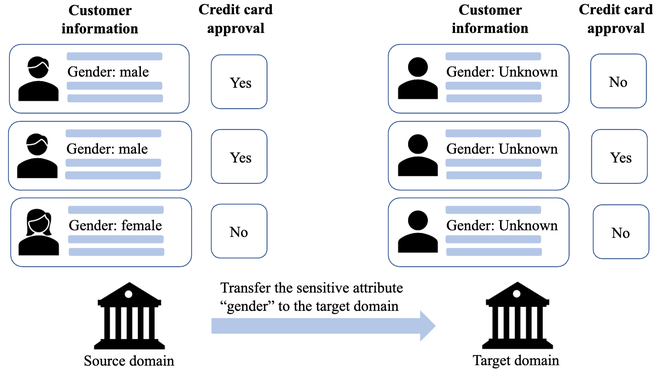

Fair Classification via Domain Adaptation: A Dual Adversarial Learning Approach.

Frontiers in Big Data, 2023

[publication] [arXiv]

-

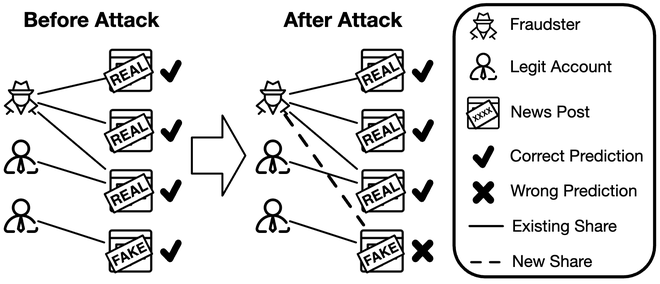

Attacking Fake News Detectors via Manipulating News Social Engagement.

WWW 2023

[arXiv] [code]

Media Coverage: [Montreal AI Ethics Institute].

2022

-

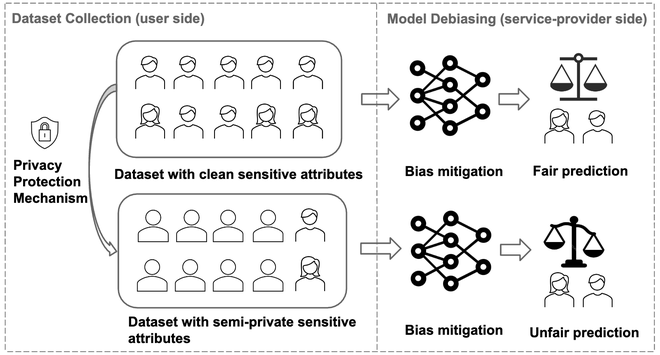

When Fairness Meets Privacy: Fair Classification with Semi-Private Sensitive Attributes.

TSRML@NeurIPS 2022 · AFCP@NeurIPS 2022

[arXiv] [Video] [Slides] [Poster]

Media Coverage: [Illinois Tech News].

-

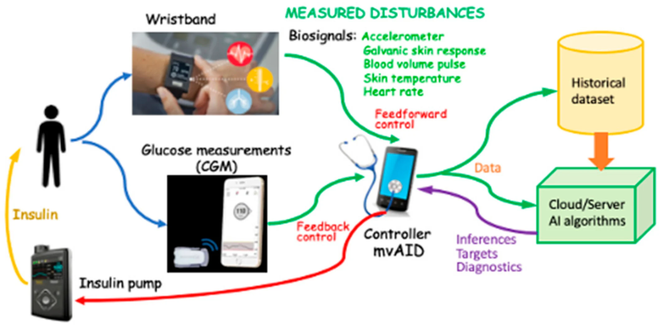

Artificial Intelligence Algorithms for Treatment of Diabetes.

Algorithms 2022

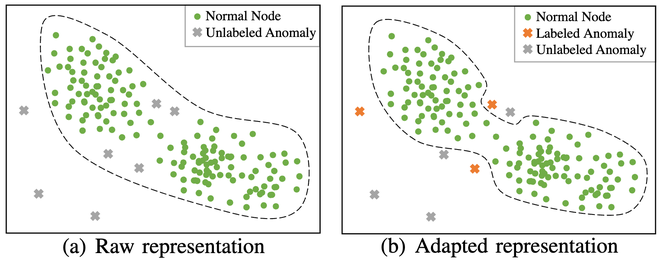

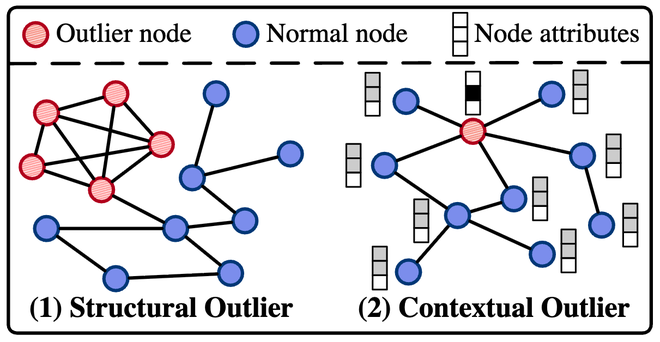

[Paper]BOND: Benchmarking Unsupervised Outlier Node Detection on Static Attributed Graphs.

NeurIPS 2022, Datasets and Benchmarks Track

[arXiv] [code]

Invited Talks

- "Can AI Agents Simulate Human Behavior?" [Slides]

  - Patronus AI: invited talk, 08/2025.

  - The Agentic AI Summit 2025, hosted by The Berkeley Center for Responsible, Decentralized Intelligence (Berkeley RDI): Lightning Talk, 08/2025.

  - Northeastern University: guest lecture for the course ARTG 6460: Human-Centered AI, invited by Prof. Dakuo Wang, 03/2025.

  - The University of Chicago, Knowledge Lab: talk invited by Prof. James Evans, 11/2024.

  - Swarma Club: talk invited by Prof. Yuxuan Liang, 06/2024.

- "Combating Misinformation in the Age of LLMs" [Slides]

  - University of Illinois Chicago (UIC): guest lecture for the course "CS 517 Socially Responsible AI", invited by Prof. Lu Cheng, 05/2025.

  - University of Massachusetts Amherst BioNLP Lab: talk invited by Prof. Hong Yu, 01/2025.

  - Illinois Institute of Technology: guest lecture for the course "CS 585 Natural Language Processing" instructed by Prof. Jacek Dzikowski, 11/2024.

  - Case Western Reserve University: guest lecture for the course "CSDS 600 Introduction to Artificial Intelligence", invited by Prof. Xiaotian (Max) Han, 11/2024.

  - University of Georgia: guest lecture for the course "CSCI 8000 Advanced Special Topics in Computer Science", invited by Prof. Zhen Xiang, 11/2024.

  - Zhejiang University: talk invited by Prof. Tianhang Zheng, 10/2024. [Poster]

  - University of Georgia: guest lecture for the course CSCI 8265: Trustworthy Machine Learning (2024 fall), invited by Prof. Ninghao Liu, 10/2024.

  - The 2024 Summit on Responsible Decentralized Intelligence: Future of Decentralization and AI, hosted by The Berkeley Center for Responsible, Decentralized Intelligence (Berkeley RDI): Research Spotlight talk, 08/2024. [YouTube]

  - KAIST/IBS Data Science Group: talk invited by Wenchao Dong, 05/2024.

- "Towards Trustworthy Large Language Models" [Slides]

  - Illinois Institute of Technology: guest lecture for the course "CS 585 Natural Language Processing" instructed by Prof. Jacek Dzikowski, 04/2025.

- "Can LLM-Generated Misinformation Be Detected?" [Slides] [YouTube]

  - AGI Leap Summit 2024: Spotlight Research talk, 02/2024.

  - Tsinghua University AI Time: invited talk, 02/2024. [Gongzhonghao]

- "Fairness in AI: An Introduction" [Slides]

  - University of Illinois Chicago (UIC): guest lecture for CS 483 Big Data Mining (2023 Spring), invited by Prof. Lu Cheng, 04/18/2023.

Awards and Fellowship

- Silver Reviewer Award at ICML 2026.

- Bloomberg Data Science Ph.D. Fellowship, 2026-2027

- Outstanding Paper Award in the AAAI 2026 Workshop on Personalization in the Era of Large Foundation Models for the first-authored paper Federated Agent Reinforcement Learning [PDF].

- Best Paper Award in the AAAI 2026 Workshop on Trust and Control in Agentic AI for the first-authored paper Federated Agent Reinforcement Learning [PDF].

- Outstanding Paper Award in the 1st Workshop on LLM Agents for Social Simulation at CIKM 2025 for the co-first-authored paper Can Large Language Model Agents Simulate Human Trust Behavior? [arXiv] [project website].

- McCormick School of Engineering Fellowship by Northwestern University, 2025.

- Patronus AI Ph.D. Research Fellowship by Patronus AI, 2025.

- Featured Research at The Agentic AI Summit 2025, hosted by The Berkeley Center for Responsible, Decentralized Intelligence (Berkeley RDI).

- Outstanding reviewer at KDD 2025 (Aug Cycle & Feb Cycle, a recognition for the top 10% of reviewers).

- Outstanding reviewer at EMNLP 2024.

- Highlight Article in AI Magazine (Volume 45, Issue 3, Fall 2024).

- Research Spotlight in The 2024 Summit on Responsible Decentralized Intelligence: Future of Decentralization and AI, hosted by The Berkeley Center for Responsible, Decentralized Intelligence (Berkeley RDI)

- Great Review at ACL Rolling Review 2024 April

- Travel Award for Seventeenth Midwest Speech and Language Days (MSLD 2024)

- Sigma Xi Student Research Award 2024 from Illinois Tech and the local Sigma Xi chapter. (An award of $500 is given each year to up to two graduate students at Illinois Tech who have demonstrated significant promise in research and scholarship through their accomplishments. There was only one awardee across the whole university in 2024.)

- Harvard Technical AI Safety Fellowship 2024 Spring.

- Third Place Award in the Illinois Tech College of Computing Poster Session 2024 (Ph.D. Group).

- Spotlight Research in the symposium AGI Leap Summit 2024.

- Didactic Paper Award (1/35 of all accepted papers) in the workshop ICBINB@NeurIPS 2023 for the first-authored paper Can LLM-Generated Misinformation Be Detected? [arXiv] [project website]. The Didactic Award goes to the most well-explained and pedagogical paper in the ICBINB workshop at NeurIPS 2023.

- NeurIPS 2023 Volunteer Award.

Grants

- Tinker Research Grant ($5000) from Thinking Machines Lab, 2026.

- Northwestern Conference Travel Grant ($600), 2026.

- NSF IIS: CAREER: Towards Fairness in the Real World under Generalization, Privacy and Robustness Challenges, Award Amount: $499,819.00. Supported by National Science Foundation, Division of Information and Intelligent Systems, Grant Award Number: #2339198, 2024-2029. (Role: Primary proposal writing assistant to PI Dr. Kai Shu)

- DHS-CAOE: Countering Misinformation in the Era of Large Language Models, Award Amount: $450,066.00. Supported by U.S. Department of Homeland Security under Grant Award Number: 17STQAC00001-07-00, 2023-2026. (Role: Primary proposal writing assistant to PI Dr. Kai Shu)

- Cisco Faculty Research Award: Towards Factual Large Language Models. Supported by Cisco Systems Inc, 2023-2024. (Role: Primary proposal writing assistant to PI Dr. Kai Shu)

Media Coverage

- Northwestern Computer Science Department News: "Multi-Institution Team Wins Two Awards at AAAI-26 Workshops".

- Illinois Tech Today: "Recognizing the Outstanding Work of Our Illinois Tech Faculty"

- Marktechpost AI Research News: "This AI Report from the Illinois Institute of Technology Presents Opportunities and Challenges of Combating Misinformation with LLMs"

- The Register: "It's true, LLMs are better than people – at creating convincing misinformation"

- Illinois Tech News: "Breaking Biases"

- Montreal AI Ethics Institute: "Attacking Fake News Detectors via Manipulating News Social Engagement"

- IEEE Spectrum: "Announcing a Benchmark to Improve AI Safety: MLCommons has made benchmarks for AI performance—now it's time to measure safety"

- 机器之心: "NeurIPS 2024 | LLM智能体真能模拟人类行为吗?答案有了"

- 机器之心: "关于LLM-as-a-judge范式,终于有综述讲明白了"

- TechBeat: "大模型时代的虚假信息危机:挑战和机遇"

Service

Area Chair

- NeurIPS 2026 Position Paper Track

Session Chair

- ACL 2025

Lead Organizer

- LLMs Meet Misinformation initiative (https://llm-misinformation.github.io), aiming to combat misinformation in the age of LLMs

- ResponsibleFM community (https://responsible-fm.github.io), dedicated to advancing socially responsible and trustworthy foundation models.

- NeurIPS 2025 Workshop on Socially Responsible and Trustworthy Foundation Models (ResponsibleFM) (https://responsible-fm.github.io)

- Workshop Towards Knowledgeable Foundation Models at ACL 2026 (https://knowledgeable-lm.github.io)

- Workshop Towards Knowledgeable Foundation Models at ACL 2025 (https://knowledgeable-lm.github.io/acl25/)

(Co-)Organizer

- Workshop on Failure Modes in Agentic AI: Reproducible Triggers, Trace Diagnostics, and Verified Fixes at ICML 2026

- The 2nd Workshop on Foundation Models Meet Embodied Agents at CVPR 2026 (https://foundation-models-meet-embodied-agents.github.io/cvpr2026/)

- Tutorial on Socially Responsible and Trustworthy Generative Foundation Models: Principles, Challenges, and Practices at NeurIPS 2025 & CIKM 2025 & ICDM 2025 (https://tutorial-trustgenfm.github.io)

- Workshop on Reasoning and Planning for Large Language Models at ICLR 2025 (https://workshop-llm-reasoning-planning.github.io)

Volunteer

NeurIPS 2022, 2023, FAccT 2023, ICML 2022, KDD 2022, WSDM 2022, AISTATS 2022, NAACL 2022, ICLR 2022

Conference Reviewer

ICLR 2023-present, ICML 2024-present, NeurIPS 2022-present, EMNLP 2024-present, KDD 2022-present, ACL 2022-present, WWW 2022-present, WSDM 2022-2025, CIKM 2022-2025, SIGIR 2022-2025, ICDM 2022-2025, WPES 2022-2024, ICWSM 2022-2024, SDM 2022-2024, AAAI 2022-2024, CHI 2025, ASONAM 2021-2022

Journal Reviewer

Journal of Data-centric Machine Learning Research, ACM Transactions on Information Systems, ACM Transactions on Intelligent Systems and Technology, ACM Transactions on the Web, IEEE Transactions on Knowledge and Data Engineering, IEEE Transactions on Artificial Intelligence, IEEE Transactions on Big Data, Elsevier Computers and Electrical Engineering, Elsevier Information Processing and Management, Elsevier Information Sciences, Elsevier Fundamental Research, PLOS One